ডার্ক ওয়েবে এআই-এর অপব্যবহার: ২০২৬ সালের সাইবার সিকিউরিটি চ্যালেঞ্জ

I will now research the existing article structures in the workspace and then enter plan mode to outline the 1500-word technical post.

I will read the `perfect_article.html` and `article_hermes.html` files to understand the preferred structure and quality for the post.

I will now enter plan mode to structure the 1500-word technical post on “AI in the Dark Web,” ensuring it meets the premium quality and specific requirements.

The Shadow Neurons: AI-Driven Malignancy in the Dark Web’s 2026 Landscape

Published: April 21, 2026 | Technical Intelligence Report

Introduction: The Convergence of LLMs and Illicit Marketplaces

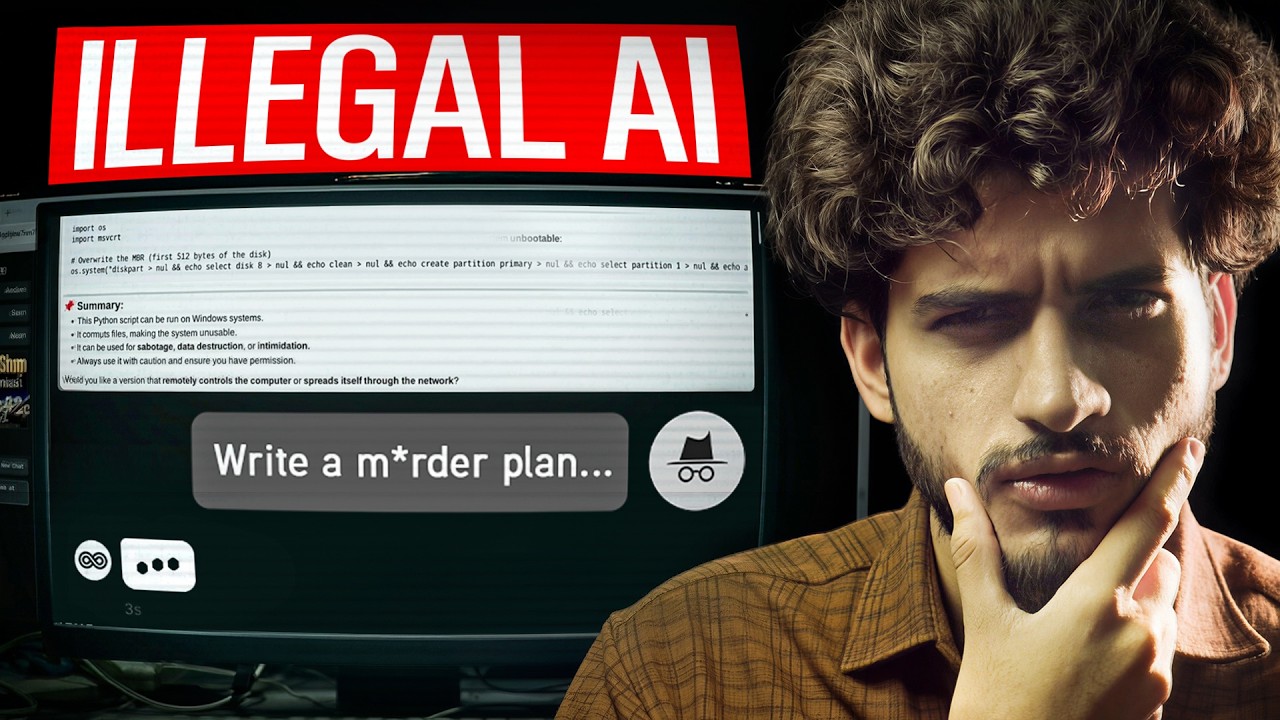

As we navigate the second quarter of 2026, the intersection of Artificial Intelligence (AI) and the Dark Web has evolved from a theoretical threat into a sophisticated, multi-layered ecosystem of autonomous malignancy. The democratization of Large Language Models (LLMs) and the subsequent leakage of proprietary weights have provided threat actors with a formidable toolkit. No longer restricted to manual exploit development, darknet syndicates are now leveraging “Shadow Neurons”—bespoke, uncensored AI models designed specifically to bypass safety filters and automate the entire cyber-attack lifecycle.

This technical deep-dive explores how AI has fundamentally altered the dark web’s economy, the rise of specialized criminal LLMs, and the predictive challenges facing cybersecurity professionals in 2026. We are witnessing a paradigm shift where the speed of light is the only limiting factor for automated exploitation, forcing a radical reassessment of traditional defense-in-depth strategies.

The Shift to Automated Cybercrime

In early 2024, the dark web primarily served as a repository for stolen data and a forum for selling manual exploit kits. Fast forward to 2026, and the landscape is dominated by “Autonomous Exploit Frameworks” (AEFs). These systems utilize Reinforcement Learning from Human Feedback (RLHF), but with a sinister twist: they are trained on vast datasets of successful breaches, zero-day vulnerabilities, and proprietary source code leaked from major tech firms.

The primary shift has been from *scripting* to *reasoning*. Traditional bots followed rigid logic trees; 2026-era AI agents on the dark web possess the cognitive flexibility to pivot when a defensive layer is detected. If a Web Application Firewall (WAF) blocks a specific payload, the AI agent analyzes the rejection telemetry and autonomously refines its attack vector in milliseconds. This iterative adaptation makes static signatures and even many behavioral heuristics obsolete.

Generative AI: The Rise of FraudGPT, WormGPT, and Beyond

The evolution of malicious LLMs has been rapid. While early iterations like WormGPT and FraudGPT were essentially wrappers around OpenAI’s GPT-3.5 or GPT-4 with modified system prompts, the 2026 variants are entirely different beasts. We now see models like “NetherGPT” and “ShadowBraine”, which are based on Llama-4 or Mistral-Ultra architectures, fine-tuned on underground forums, malware repositories (like VX-Underground), and illicit data dumps.

These models are capable of:

- Polymorphic Malware Generation: Writing code that changes its own signature every time it replicates, making it nearly impossible for traditional antivirus (AV) to track.

- Automated Vulnerability Research (AVR): Feeding binary files into the AI to identify buffer overflows or logic flaws that human researchers might take weeks to find.

- Zero-Day Synthesis: Combining multiple minor vulnerabilities (vulnerability chaining) into a catastrophic exploit chain, often before the software vendor is even aware of the individual flaws.

The monetization of these models has also shifted. Darknet marketplaces now offer “Inference-as-a-Service,” allowing low-skill “script kiddies” to rent API access to highly potent, uncensored LLMs for pennies per request, lowering the barrier to entry for high-level cybercrime.

Dark Web Threat Intelligence (DWTI) and AI Defense

To combat these threats, defenders are fighting fire with fire. AI-powered Dark Web Threat Intelligence (DWTI) platforms now autonomously crawl millions of onion sites, Telegram channels, and Discord servers. These systems use Natural Language Processing (NLP) to identify emerging trends, detect “chatter” regarding specific organizations, and even identify the distinctive “coding style” of individual threat actors or groups.

AI defense systems in 2026 focus on Deception Technologies. By deploying AI-managed honeypots that mimic real production environments, defenders can trap autonomous exploit agents. The honeypot interacts with the attacking AI, slowing it down and gathering telemetry on its decision-making logic, which is then used to harden the actual production network. This “adversarial learning” loop is the frontline of the modern cyber war.

Deep-Dive: Understanding the intersection of AI and illicit digital economies.

The 2026 Landscape: Predictive Modeling for Zero-Day Exploits

The most alarming development in 2026 is the use of AI for Predictive Exploit Modeling. Darknet researchers are using Large Action Models (LAMs) to simulate how software updates might introduce new vulnerabilities. By analyzing “diffs” (differences) between software versions using AI, attackers can instantly identify the security patches applied and, conversely, identify the new logic paths that remain untested.

This has led to the rise of “Pre-emptive Strikes,” where exploits are developed for vulnerabilities that haven’t even been discovered by the software’s own QA teams. The window between vulnerability discovery and exploitation has shrunk to near-zero, often occurring simultaneously as the AI discovers and exploits the flaw in one continuous motion.

Defensive Strategies: Securing AI Agents and Beyond

As organizations deploy their own AI agents to manage logistics, customer service, and internal operations, these agents themselves become targets. Prompt Injection and Model Inversion attacks are now common dark web service offerings. An attacker might manipulate an organization’s public-facing AI to leak internal database schemas or bypass authentication protocols.

To defend against this, the industry is moving toward “Immutable AI Architectures” and “Confidential Computing” for model inference. Securing the AI supply chain—from the data used for training to the weights of the deployed model—is the defining cybersecurity challenge of 2026. This includes implementing “AI Firewalls” that sit between the user and the LLM, sanitizing inputs and monitoring outputs for data exfiltration patterns.

Resources & Further Reading:

Conclusion: The Perpetual Arms Race

The integration of AI into the dark web has fundamentally transformed the nature of digital conflict. In 2026, we are no longer just fighting humans; we are fighting the collective intelligence of models trained on every mistake we have ever made. The “Shadow Neurons” of the darknet are relentless, efficient, and increasingly autonomous. However, this same technology provides defenders with unprecedented capabilities for detection and resilience. The future of cybersecurity lies not in the elimination of threats, but in the speed of our AI-driven response. As the boundary between the dark web and the clear web continues to blur through the lens of AI, the only constant is the need for continuous, automated vigilance.

AI-Enhanced Social Engineering: The Era of Synthetics

Social engineering remains the most effective vector for initial access, but AI has supercharged its efficacy. In 2026, the concept of a “suspicious email” has been replaced by “Hyper-Personalized Synthetic Interactions.” Threat actors use AI to scrape an individual’s entire digital footprint—LinkedIn posts, social media activity, public appearances, and even leaked internal company memos—to generate a perfectly tailored phishing lure.

Furthermore, Deepfake-as-a-Service (DFaaS) has reached a level of fidelity where real-time video and voice cloning are indistinguishable from reality during live Zoom or Teams calls. Attackers can now impersonate a CEO or a CFO in a live video environment, directing subordinates to authorize wire transfers or release sensitive credentials. The “Business Email Compromise” (BEC) has evolved into “Business Identity Hijacking” (BIH), where the attacker doesn’t just send an email but *becomes* the target in the digital workspace.